Two new experiments show that most people don’t even consider that a personal message might be AI-generated, even if they themselves use artificial intelligence to write.

An AI-generated fictional apology sent via text was one of the messages participants evaluated in a recent study.

Zhu & Molnar (2026)

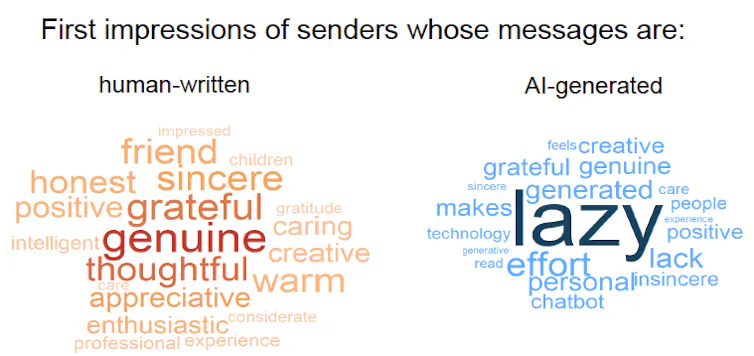

We found a clear “AI disclosure penalty.” When people knew a message was AI-generated, they rated the sender much more negatively (“lazy,” “insincere,” “lack of effort”) than when they believed that the same text was written by a person (“genuine,” “grateful,” “thoughtful”).

But here’s the twist: The participants who weren’t told anything about authorship formed impressions that were just as positive as those of people who were told the messages had actually been written by a human.

This complete lack of skepticism surprised us, and it raises new questions. Perhaps participants weren’t familiar enough with AI to realize that today’s models can produce detailed and personal messages. (They can.) Or perhaps participants have never used AI themselves. (They probably have.) So we also tested whether participants’ own AI use changed how they judged senders.

To our even greater surprise, we found little to no effect. People who use generative AI fairly frequently in their daily lives, at least every other day, did penalize AI use slightly less when AI authorship was disclosed, compared with people who never or rarely use AI. But participants were no more skeptical by default: When authorship was not disclosed, heavy AI users, light AI users and nonusers all tended to assume the text was written by a person and formed essentially the same impressions.

Word clouds depict participants’ first impressions of senders who wrote messages themselves, left, and those who used AI, right.

Andras Molnar

Why it matters

A lack of skepticism and a lack of negative impressions matter because people make social judgments from text all the time. Recipients treat the time and effort taken to send a written message as an insight into the writer’s sincerity, authenticity or competence, and those impressions shape people’s decisions in friendships, dating and work.

Yet our main findings reveal a striking disconnect: People generally don’t suspect AI use unless it’s obvious. This unawareness creates a moral dilemma: People who use AI in secret can enjoy the benefits while facing almost no risk of detection. Meanwhile, ironically, people who are upfront and admit to using AI suffer a reputational hit.

Over time, this lack of skepticism and awareness could reshape what writing means in everyday life. Readers may learn to treat writing as a less reliable signal of someone’s character or effort, and instead rely on other forms of communication. For example, widespread AI use has already prompted employers to discount the value of cover letters from job applicants. Instead, they’re relying more on personal recommendations from an applicant’s current supervisor or connections made through in-person networking.

What other research is being done

Other researchers have documented a range of negative impressions about people who disclose their AI use. Studies show it makes job applicants seem less appealing and employees seem less competent. Readers of creative writing perceive AI users as less creative and less authentic. People see personal apologies and corporate apologies that stem from AI as less effective. In general, disclosing AI use decreases trust and undermines legitimacy.

Yet without disclosure, there’s clear evidence that most people can’t reliably detect AI-generated text, even with the help of detection tools, especially when the text is a mix of human-written and AI-generated content. Even if people feel confident about their ability to spot AI text, their confidence may be nothing more than a self-affirming illusion.

What’s next

Although our experiments didn’t reveal suspicion of AI use, that doesn’t mean people never suspect it in the real world. In some settings, people may already be hypervigilant about AI. Use in academia is an obvious example. In our next studies, we want to understand when and why people naturally start to suspect AI use, and what flips the switch between trust and doubt.

The Research Brief is a short take on interesting academic work.